Beware of what you wish for

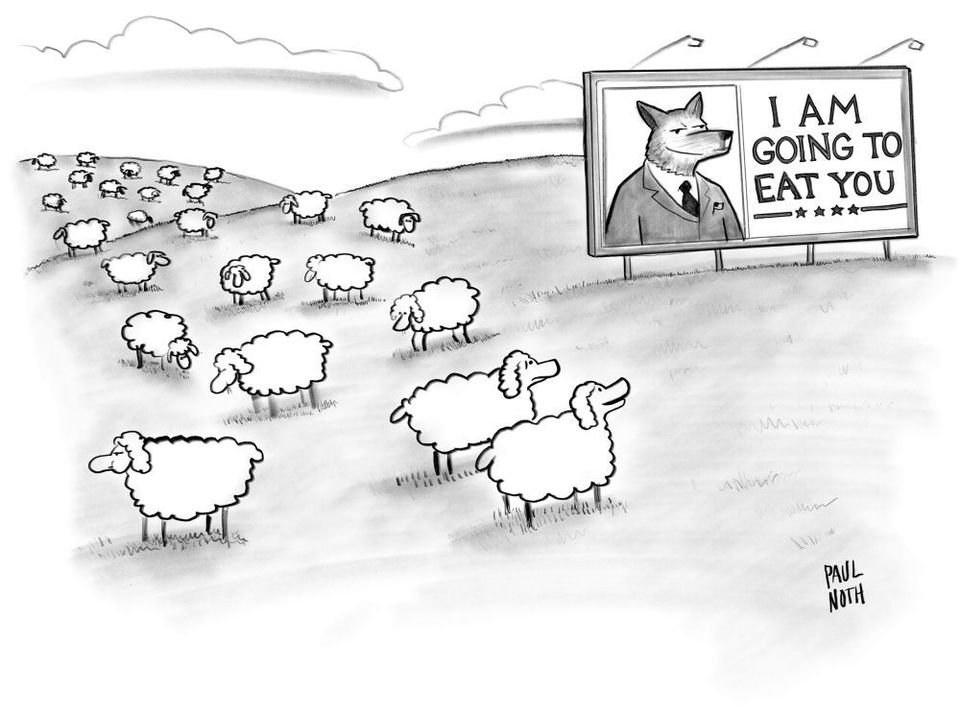

The ethics of AI is a booming business, a key trend for 2020, and a recurring subject for this newsletter. However, is this flurry of activity that seeks to bring an ethical framework to AI counterproductive? Is AI ethics an example of the cobra effect?

The cobra effect is an analogy frequently used by public speakers and smarty pants, but I’ve only recently been exposed to the anecdote, so forgive me for this first-time application. It evokes the idea that the solution to a problem can often make the problem worse. From the Wikipedia entry:

The term cobra effect originated in an anecdote, set at the time of British rule of colonial India. The British government was concerned about the number of venomous cobra snakes in Delhi. The government therefore offered a bounty for every dead cobra. Initially this was a successful strategy as large numbers of snakes were killed for the reward. Eventually, however, enterprising people began to breed cobras for the income. When the government became aware of this, the reward program was scrapped, causing the cobra breeders to set the now-worthless snakes free. As a result, the wild cobra population further increased.

The alarm raised over AI, and its relative lack of ethics, partly reflected a desire to slow down the development of the technology. However, the rash of ethical takes on AI, and the thought leadership industry flourishing around it, are, if anything, helping to accelerate the development of AI.

As a cobra effect, AI ethics is not making AI ethical, and may even be encouraging the AI industry to be more unethical. AI becomes the contemporary iteration of might is right.

There’s more talk of artificial intelligence ethics than ever before. But talk is just that—it’s not enough. https://t.co/QDSkQVDuFh

— MIT Technology Review (@techreview) January 12, 2020

But talk is just that—it’s not enough. For all the lip service paid to these issues, many organizations’ AI ethics guidelines remain vague and hard to implement. Few companies can show tangible changes to the way AI products and services get evaluated and approved. We’re falling into a trap of ethics-washing, where genuine action gets replaced by superficial promises. In the most acute example, Google formed a nominal AI ethics board with no actual veto power over questionable projects, and with a couple of members whose inclusion provoked controversy. A backlash immediately led to its dissolution.

I’m not suggesting that the exploration of the ethical issues surrounding AI is a waste of time. Far from it. The recent interest in technology ethics is encouraging critical thought and opening up spaces for more critical voices.

If anything, it is making it easier for us to identify when and where bad things happen, whether in AI research or in the deployment of AI. For example, when a dating app uses AI to create fake women users so as to fool advertisers (and other users):

a great example of AI ethics in action #artificialintelligence #grift #fraud #dating #Tinder https://t.co/3Hse2wgqGv

— David Cheems Golumbia (@dgolumbia) January 13, 2020

However, recognizing or calling out unethical behaviour does not actually stop it. It’s not enough for AI ethics to get more attention; what’s important is that it results in organizational and societal change.

“Simply arguing that your AI platform was a black box that no one understood is unlikely to be a successful legal defense in the 21st century. It will be about as convincing as ‘the algorithm made me do it.’” https://t.co/J6luljfMWV

— Wolfgang Schröder (@Faust_III) December 25, 2019

Despite heightened awareness about the potential risks of AI and highly publicized incidents of privacy violations, unintended bias, and other negative outcomes, AI ethics is still an afterthought, a recent McKinsey survey of 2,360 executives shows. While 39% say their companies recognize risk associated with “explainability,” only 21% say they are actively addressing this risk. “At the companies that reportedly do mitigate AI risks, the most frequently reported tactic is conducting internal reviews of AI models.”

There’s good reason to believe that companies are not taking AI ethics seriously because the laws or financial penalties are not there yet to motivate them to do so.

However, for those laws or fines to be in place, people need to care, society needs to care, and that may be a key shortcoming of the current AI ethics frame.

AI ethics is failing because it is not focused on the big picture and the larger ethical problems that face society.

This is the paradox and the contradiction. On the one hand, AI and automation lay claim to profound transformation, and to the idea that they will impact all areas of society. Yet on the other hand, AI ethics tends to focus on small things that might be tweaked, adjusted, or mitigated, rather than on the connection between AI, power, and the concentration of wealth.

Samsung's Neon AI has an ethics problem, and it's as old as sci-fi canon https://t.co/ydQCBVscgC

— Sergio Reider (@sreiderb) January 13, 2020

We haven't heard much talk from "innovators" about reducing economic inequality by distributing the surplus labor value created by artificial intelligence. Rather, profits grow in the bank accounts of billionaires while economic class disparity increases at a startling rate amid unaddressed AI-related labor displacement anxieties.

Nor have we heard much from technology "leaders" about the use of artificial intelligence to reduce global human rights violations. Rather, we have a crop of technology companies that are using AI to help the US military kill people, to create facial recognition systems used to track Muslim minorities in China, and to help addiction-by-design social websites spread micro-targeted political propaganda. The list goes on.

Debates and discussions regarding ethics are tolerated when the stakes are small, but once the context expands, matters of life and death supposedly outrank any ethical concerns.

Why would we be distracted by ethics when authoritarians are not?

3/5

— Daniel Faggella (@danfaggella) January 13, 2020

Maybe said divide turn out to be a good thing after all, forcing the DoD to focus more overtly on ethics.

...but maybe it'll just end up making the West weaker than the Chinese CCP leadership - who faces no such scruples. https://t.co/voYLyo7Wk9

The reality is that ethics don’t make us weak, but the lack of ethics will certainly make us authoritarian. Rather, ethics can and should make us strong, especially when it comes to resisting authoritarianism.

War is an area where ethics are questionable, but also necessary. The belief that winning must come regardless of the cost usually ensures that victory will be indistinguishable from defeat.

Scratch the surface of the AI arms race and once again you’ll find that might is right.

The sad truth of the matter. When you really open up the gates for true Machine Learning and AI - the machines will inherently cheat like this, because if the goal is winning, they will do anything to achieve that objective. Because machines don’t need ethics to win. https://t.co/frDwhf4Ve1

— Christopher Kusek (@cxi) January 13, 2020

However, the history of society could be regarded as an aspiration to prove that might is not right: that it is possible to get together, make friends, live long, and prosper, all without regularly killing each other. Is that too much to ask?

Maybe that’s why AI ethics has become so popular. We want society. We want a social contract.

I beg people who create AI like this to take at least one ethics class https://t.co/BmXILm6lqj

— Blizz #BLM (@uncleancasuals) January 9, 2020

Which is why I think AI ethics as cobra effect is an interesting lens through which to examine and understand our current moment in history.

On the one hand, I love that more people are recognizing the value of ethics and the important role it can and should play as we attempt to automate everything.

"Until now, AI ethics has felt like something to have a pleasant debate about in the academy. In 2020 we will realise that not taking practical steps to embed them in the way we live will have catastrophic effects." Scorcher of a piece from @hare_brain https://t.co/GhnyMaeH6c

— Reema Patel (@Reema__Patel) January 13, 2020

What’s missing at the moment is a rigorous approach to AI ethics that is actionable, measurable and comparable across stakeholders, organisations and countries. There’s little use in asking STEM workers to take a Hippocratic oath, for example, having companies appoint a chief ethics officer or offering organisations a dizzying array of AI-ethics principles and guidelines to implement if we can’t test the efficacy of these ideas.

On the other hand, however, we need to ensure these ethical endeavors are substantive and legit. There’s far too much opportunity for sleight of hand and straight-up deception.

When choosing to replace human with #AI judgement, don't look at only the percentage accuracy but the pattern of accuracy. What is the accuracy for minorities & anomalies, not just for the average or majority? Otherwise we will amplify and automate #disparity. #diagnosis #ethics

— jutta treviranus (@juttatrevira) January 13, 2020
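The point about the pattern of accuracy can be made concrete with a small sketch. What follows is only an illustration under stated assumptions: the function name `accuracy_by_group`, the group labels, and the toy data are all hypothetical and come from neither the tweet nor any real system. It simply shows how a respectable overall score can hide complete failure for a smaller group.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Return (overall accuracy, {group label: accuracy}) for parallel lists."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    overall = sum(correct.values()) / sum(total.values())
    per_group = {g: correct[g] / total[g] for g in total}
    return overall, per_group


# Hypothetical toy data: 8 "majority" cases followed by 2 "minority" cases.
y_true = [1, 0, 1, 1, 0, 1, 0, 1, 1, 0]
y_pred = [1, 0, 1, 1, 0, 1, 0, 1, 0, 1]
groups = ["majority"] * 8 + ["minority"] * 2

overall, per_group = accuracy_by_group(y_true, y_pred, groups)
print(f"overall: {overall:.0%}")
for name, acc in per_group.items():
    print(f"{name}: {acc:.0%}")
```

In this made-up case the headline figure is 80%, yet every single minority case is wrong. A single percentage would never reveal that.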

AI is a powerful tool, and as such it should be used responsibly. However, the use of the tool is not the only ethical consideration. There’s also the (social) impact that tool has. In his latest book, French philosopher Gaspard Koenig laments the impact of AI on the individual, and stresses the individual’s need to resist the (social) impacts of AI.

"society should be cautious about the power of AI not because it will destroy humanity (as some argue) but because it will erode our capacity for critical judgement" #ai #ArtificialInteligence #technologies #aiethics #neuroscience https://t.co/QTlR2dRdj0

— Columbia University NeuroRights Initiative (@neuro_rights) January 13, 2020

The act of reaching a decision creates a “moral responsibility” that makes it possible to avoid manipulation, as explains Daniel Dennett, a philosopher of cognition. This idea is crucial to help resist simplistic algorithmic recommendations. “I refuse Amazon’s book suggestion not because I won’t like it, but because I am me!” This reflex of pride is intimately linked to a desire for autonomy.

While Yuval Noah Harari, a historian of humanity and technology, argues that AI knows us better than we know ourselves, I believe it prevents us from becoming ourselves. While Harari prides himself on renouncing the individual and abolishing the self in meditation, I propose that we reaffirm the unique beings that we are—or at least, leave open the possibility of becoming one in the future. These metaphysical considerations are indispensable to guide our relationship with technology.

Is the cobra effect of AI ethics the avoidance of the real ethical debates that we should be having about AI? Does it enable the further development of AI while glossing over the important debates about power, humanity, and social control? What do you think? What role should ethics play? Is ethics even possible in a technological society? Or is this really the end of ethics? #metaviews

Here’s an interview I conducted on the end of ethics in a technological society well over a decade ago: