Automating self-fulfilling prophecy

There is a general perception that with sufficient data, and smart enough machine learning, the future can be predicted.

An ongoing and arguably controversial example of this is the weather forecast. As a farmer I’ve become one of those people who pay close attention to weather forecasts, and it is remarkable how often they can be wrong. Or rather, they’re constantly changing, often dramatically so.

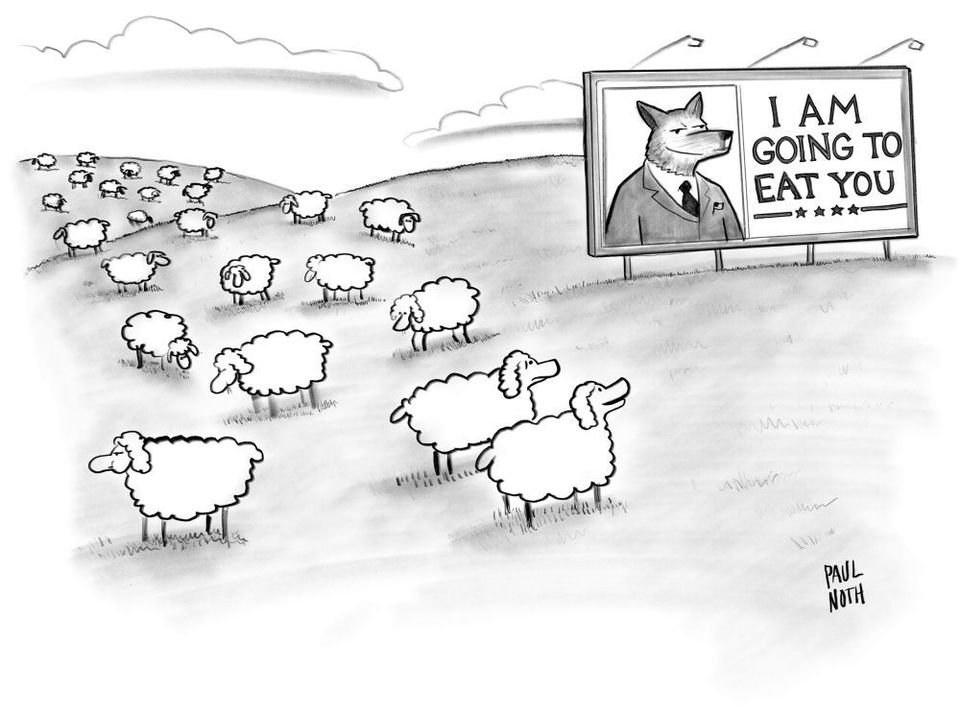

Yet what if our perception of predictions was itself biased in favour of the predictions? What if we become so wedded to a prediction that, much like target fixation, the act of predicting influences the outcome?

These are considerations to keep in mind as we reconfigure our society towards optimizing predictive opportunities.

Or optimizing humans as predictive machines.

An essay notes the drift in many precincts -- technologists, psychologists, some philosophers, neuroscientists -- towards seeing the mind as, essentially, a prediction machine. But is that view true? https://t.co/6WfpF0b3je

— Clive Thompson (@pomeranian99) January 31, 2021

tl;dr: Essayist argues "no", and "it's dangerous, too"

Yet Facebook and its peers aren’t the only entities devoting massive resources towards understanding the mechanics of prediction. At precisely the same moment in which the idea of predictive control has risen to dominance within the corporate sphere, it’s also gained a remarkable following within cognitive science. According to an increasingly influential school of neuroscientists, who orient themselves around the idea of the ‘predictive brain’, the essential activity of our most important organ is to produce a constant stream of predictions: predictions about the noises we’ll hear, the sensations we’ll feel, the objects we’ll perceive, the actions we’ll perform and the consequences that will follow. Taken together, these expectations weave the tapestry of our reality – in other words, our guesses about what we’ll see in the world become the world we see. Almost 400 years ago, with the dictum ‘I think, therefore I am,’ René Descartes claimed that cognition was the foundation of the human condition. Today, prediction has taken its place. As the cognitive scientist Anil Seth put it: ‘I predict (myself) therefore I am.’

The technologies of prediction are derived from the methods of planning, which social psychology has demonstrated are full of flaws and biases.

Yet this particular perspective on prediction is an example of brute force tactics (i.e. big data) mixed with self-fulfilling prophecies (i.e. predictive analytics). Put bluntly, if you make enough guesses, you can provide a road map that gets you to the prediction you wanted in the first place.

If software has eaten the world, its predictive engines have digested it, and we are living in the society it spat out.

Questioning algorithmic authority is difficult for most people, as they may not be aware that said algorithmic authority exists in the first place.

Yet how do we question predictions, especially when those predictions are tied to communication environments that nudge consumers (and citizens) to make certain purchases and decisions? How much of this is a prediction, and how much is prescription?

The strength of this association between predictive economics and brain sciences matters, because – if we aren’t careful – it can encourage us to reduce our fellow humans to mere pieces of machinery. Our brains were never computer processors, as useful as it might have been to imagine them that way every now and then. Nor are they literally prediction engines now and, should it come to pass, they will not be quantum computers. Our bodies aren’t empires that shuttle around sentrymen, nor are they corporations that need to make good on their investments. We aren’t fundamentally consumers to be tricked, enemies to be tracked, or subjects to be predicted and controlled. Whether the arena be scientific research or corporate intelligence, it becomes all too easy for us to slip into adversarial and exploitative framings of the human; as Galison wrote, ‘the associations of cybernetics (and the cyborg) with weapons, oppositional tactics, and the black-box conception of human nature do not so simply melt away.’

How we see ourselves matters. As the feminist scholar Donna Haraway explained, science and technology are ‘human achievements in interaction with the world. But the construction of a natural economy according to capitalist relations, and its appropriation for purposes of reproducing domination, is deep.’ Human beings aren’t pieces of technology, no matter how sophisticated. But by talking about ourselves as such, we acquiesce to the corporations and governments that decide to treat us this way.

This is a solid argument in favour of predictive skepticism.

The metaviews of prediction concern not whether a given prediction is true, but what frame is implicit in it.

In this context, prediction shows that the medium is the message, because the outcome of the prediction is less important than the impact of the prediction itself.

This is especially so in a society that values predictions, and wants to normalize and invest authority in predictive systems.

The prediction fallacy is an extension of the planning fallacy: “a phenomenon in which predictions about how much time will be needed to complete a future task display an optimism bias and underestimate the time needed.”

Are those shipping vessels circumnavigating Africa wiser than those waiting for the Suez Canal to clear?

Is the optimism bias embedded into our relationship with predictions? Not just the predictions, but the act of predicting?

Like a gambler who refuses to believe that the casino always wins. Or an investor who believes they can outsmart the market. Or a social media user who thinks they can play the algorithm?

If the influencer is the tail that is wagged by the dog, then the dog is the prediction machine that uses advertising as an attempt to monetize the future of your attention (and consumer decision making).

How we understand and potentially resist this machine will influence our future as much as, if not more than, the attempts to predict it.