We may not know what we think we know

The stories we tell ourselves about automation and artificial intelligence are themselves a distraction from the political and economic changes that are underway.

The rapid rate at which artificial intelligence and automated decision making are spreading represents a kind of revolution in how societies choose to govern themselves. Take a look at DeepIndex, a growing list of areas and applications involving AI.

General Artificial Intelligence is already here, and it's evil, and it's corporations.

— James Bridle (@jamesbridle) September 9, 2019

This boom in machine learning applications is also creating a boom in demand for ethicists and lawyers who can help adapt existing regimes and moral frameworks to the new world of what is possible. Even the US Department of Defense is seeking an ethicist to help it build killer robots.

Not to fear, dear human: the (mostly) young people of Hong Kong are developing tactics and techniques to sabotage and evade the surveillance state.

— Demystification Committee (@dmstfctn) September 7, 2019

Unfortunately, these tactics are not helping us escape the fog of war that surrounds the rise of AI. Rather, the fire hose of public relations and start-up pitches that provides the chorus for automation hides the human elements that drive this digital transformation.

“While the robots appear to be autonomous, they’re actually operated by remote workers in Colombia who make $2 an hour, & use GPS & cameras to send the robots instructions every 5-10 seconds.” https://t.co/jnYnCEaABz #SleepDealer

— Frank Pasquale (@FrankPasquale) August 31, 2019

When we think about the impacts of algorithms, we should focus not on the bias of the machines, or on their ethics, but on the broader notion of systemic algorithmic harms. Algorithms are not individuals: the biases they exhibit, and the ethics that should inform their governance, derive from their use in society as a whole. It is therefore important to pay attention to how those algorithms reinforce the status quo and potentially automate inequality.

I'm slowly finding my voice, but it's scary work. Thank you @nataliegk1 and researchers @datasociety for your support and encouragement. https://t.co/lKj9gkesFO

— Panic! At the Discourse (@kinjaldave7) May 31, 2019

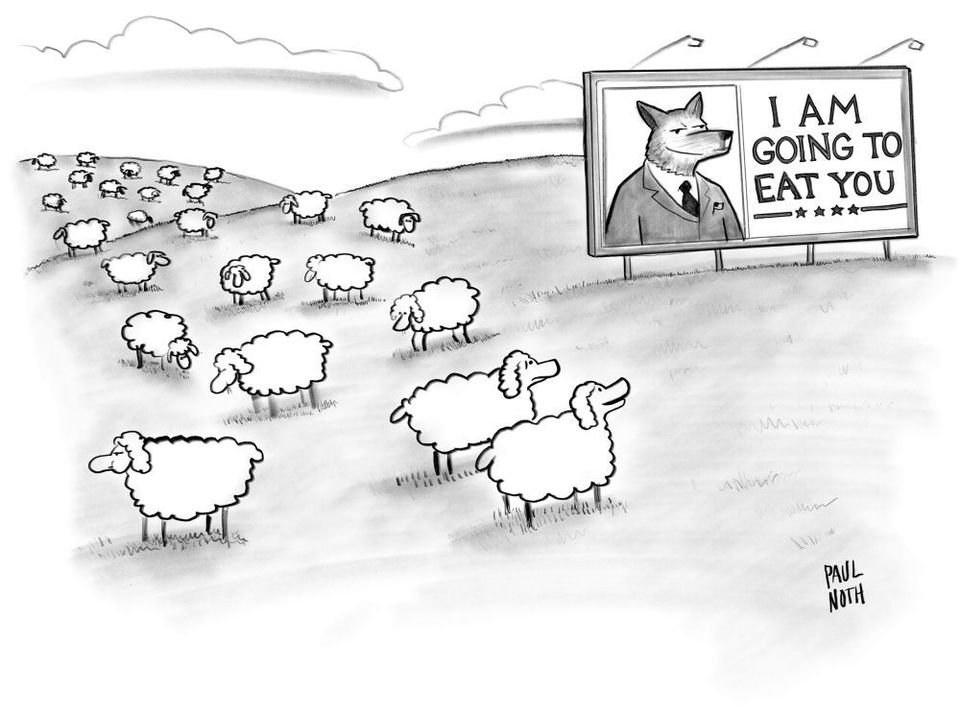

The systemic impacts of algorithms become explicit when the algorithms are deployed as “individuals” seeking to influence the biases of humans:

A small number of ‘zealot’ bots deployed on a social network can bias how people vote. Published in Nature.

— Tommaso Valletti (@TomValletti) September 5, 2019

Information gerrymandering in social networks skews collective decision-making https://t.co/J4gjuIJCBG

Yet this practice does not depend solely on automation. Rather, it takes advantage of hybrid systems that combine humans and machines to skew or amplify particular narratives or perspectives.

New report by @BostonJoan & Brian Friedberg for @datasociety highlights the practices media manipulation. It's fantastic - read it. https://t.co/CijofsDvqq

— Shannon McGregor, PhD (@shannimcg) September 5, 2019

And, I'll add, it's implications are even greater, taken what we know about how journalists use social media. 1/x

Researchers are, to a certain extent, catching up with the practices honed on imageboards like 4chan and, later, 8chan, where media manipulation and algorithmic exploitation flourished. VICE recently aired an interview with the founder of 8chan that provides insight into the platforms that incubated these methods (and why).

Finished watching the piece @elspethreeve produced with me about 8chan for @Vicenews @HBO.

— Fredrick Brennan 🔣🇵🇭✝ (@HW_BEAT_THAT) September 5, 2019

I've gotta say, I thought it was really good. Very even handed.

I'm a bit disappointed they didn't show the footage of Jim's house, but I understand.

Thank you @VICE for the opportunity

In hindsight, this fog of war surrounding the rise of the machines will be regarded as the preface to a larger storm, one that further erodes our shared reality in favor of a virtual reality that is increasingly subjective and imagined.

Just as with AI, the stories we’ve been sharing about virtual reality are also a distraction, indicative of neither the potential that exists nor the potential dystopia it could enable.

“I was wrong about space migration,” Timothy Leary told me, referring to his book Exo-Psychology. “Humanity is not going to migrate into outer space. We’re going in there. That’s what’s next. It’s digital acid.” https://t.co/Sb8Nxvmt4D

— douglas rushkoff (@rushkoff) August 27, 2019

Certainly, a goal of this newsletter is to make sense of the terrain, in spite of the fog of war, and to create, together, a reality that empowers and educates rather than exploits and deceives.